Slido / Cisco — AI Feature Design

Making meetings smarter, one poll at a time

Meetings lose value when discussion doesn't lead to decisions. I led the design sprint that generated this concept, then continued as lead UX designer as it moved through POC — designing an AI assistant that suggests Slido polls based on what's happening live in the meeting.

Role

Design Sprint Lead, Lead UX Designer

Scope

Concept to POC

Company

Slido / Cisco

Duration

Sprint + ongoing

Context

This project grew out of the vision work

Earlier in 2025, I developed a product vision for Slido, defining where the product should go as part of a combined Cisco/Webex strategy. Part of that work identified a clear opportunity: Slido's value wasn't reaching the people who needed it most, because they didn't know it was there.

The cross-org design sprint was the next step. We used the vision as a foundation to explore what a more proactive, AI-native Slido experience could look like — across the full meeting lifecycle, and across the Webex ecosystem.

The Sprint

From eleven interviews to four concepts

Getting nineteen people across three timezones to converge on anything useful required the problem space to be sharp before the sprint started. I synthesised findings from eleven expert interviews spanning customer success managers, meeting hosts, executives, and enterprise admins. The complaints were varied. The pattern underneath them wasn't: people knew when a meeting needed engagement, but the friction of getting there was enough to make them give up.

Those findings shaped three How Might We statements — one each for pre-meeting, during, and post. Keeping it to three was deliberate. Too broad and the week fragments; too narrow and you're designing a feature, not exploring a space. During the sprint I directed the design work, set the quality bar, and sketched ideas that made it through directly into the prototype.

19

Participants across EMEA, US, APAC

11

Expert interviews synthesised pre-sprint

4

Concepts from the sprint

3

HMW statements: pre, during, post

From research synthesis to structured problem framing to concept generation.

Four outputs. One for Slido to own.

The sprint converged on four concepts spanning the meeting lifecycle. Each was tied to a different team. As the Slido design lead, I took forward the concept Slido could control end-to-end.

Proactive AI Assistant

Auto-captures notes, action items, and insights during the meeting — no prompts needed.

Webex

Timing Reminders

Heads up when time is almost up — so hosts can wrap confidently without overrunning.

Webex

AI Generated Slido

AI reads the live transcript and suggests Slido polls at the right moment.

Slido

This case study

Meeting Recap

Vidcast clips, Slido polls, action items, and insights in one shareable summary.

Slido / Vidcast / Webex

The sprint prototype

The sprint produced a high-fidelity prototype covering all four concepts. We tested it with customers across the participant range — the AI-generated polls concept consistently produced the strongest response.

The Problem

The issue wasn't that people didn't want to engage. It was that the moment passed before they could.

The expert interviews revealed something specific: hosts knew when engagement would help — a decision point, a tense discussion, a room that had gone quiet. They just couldn't act fast enough. Creating a Slido poll mid-meeting takes too long. By the time it's ready, the moment is gone.

The secondary problem was awareness. Many Webex users had no idea Slido was integrated at all. The feature existed. It just wasn't surfacing itself when it mattered.

Both problems point to the same solution: don't wait for the host to initiate. Detect the moment and bring the tool to them.

“If even the friction is taking 15 seconds, they would say, you know what, let’s not do it. We’ll do it next time.”

“They want to have more interaction during their meetings, but they actually don’t build interaction into their meetings.”

How it works

Read the room. Suggest the poll.

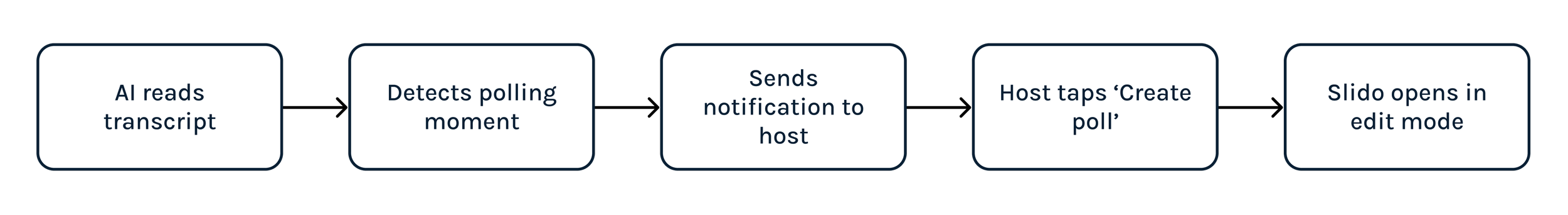

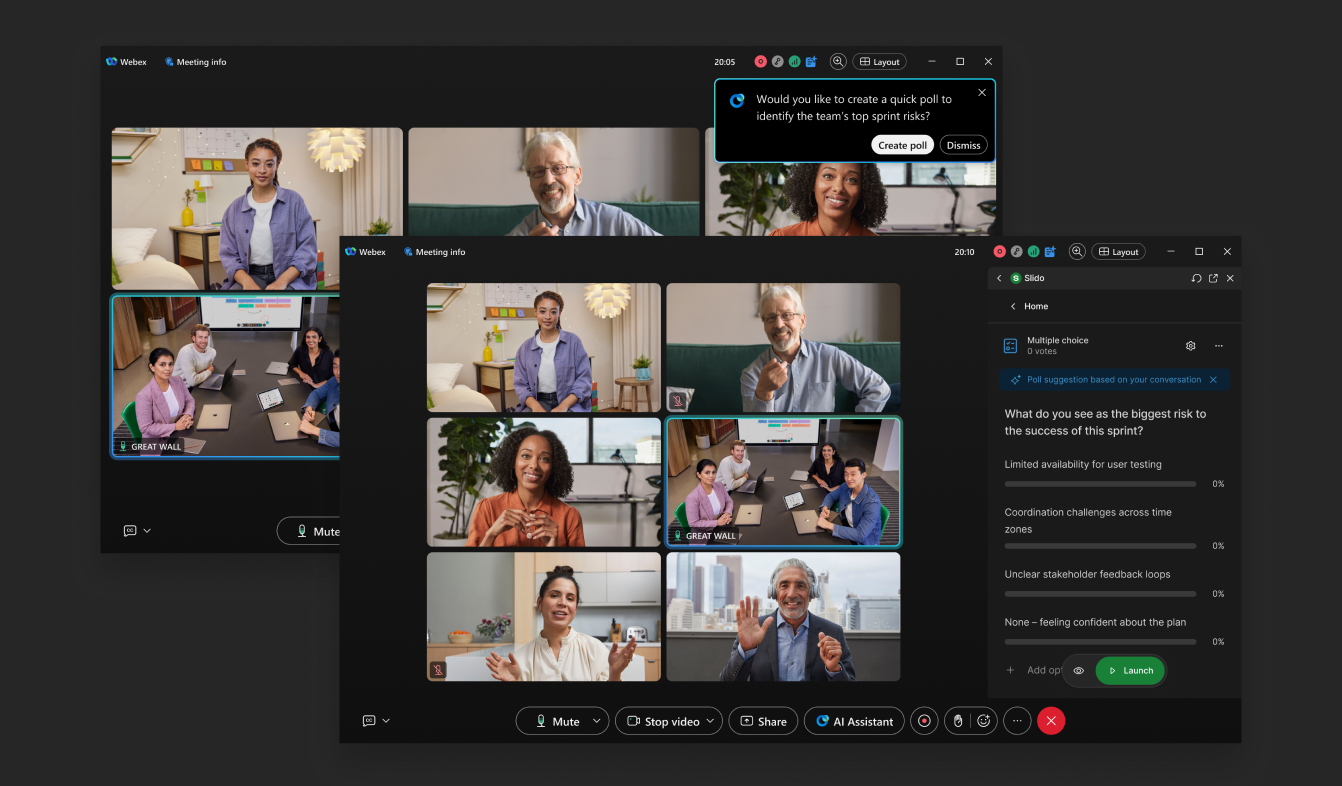

Webex AI Assistant reads the live meeting transcript, identifies moments where engagement would add value, and suggests a Slido interaction to the host. The AI does the detecting. The host stays in control — reviewing, editing if needed, and launching in seconds.

The system detects three types of meeting moment: icebreakers, decision points, and open Q&A signals. Three is deliberate — enough range to be useful, narrow enough for the AI to be accurate rather than speculative.

Design

Control, speed, and not getting in the way

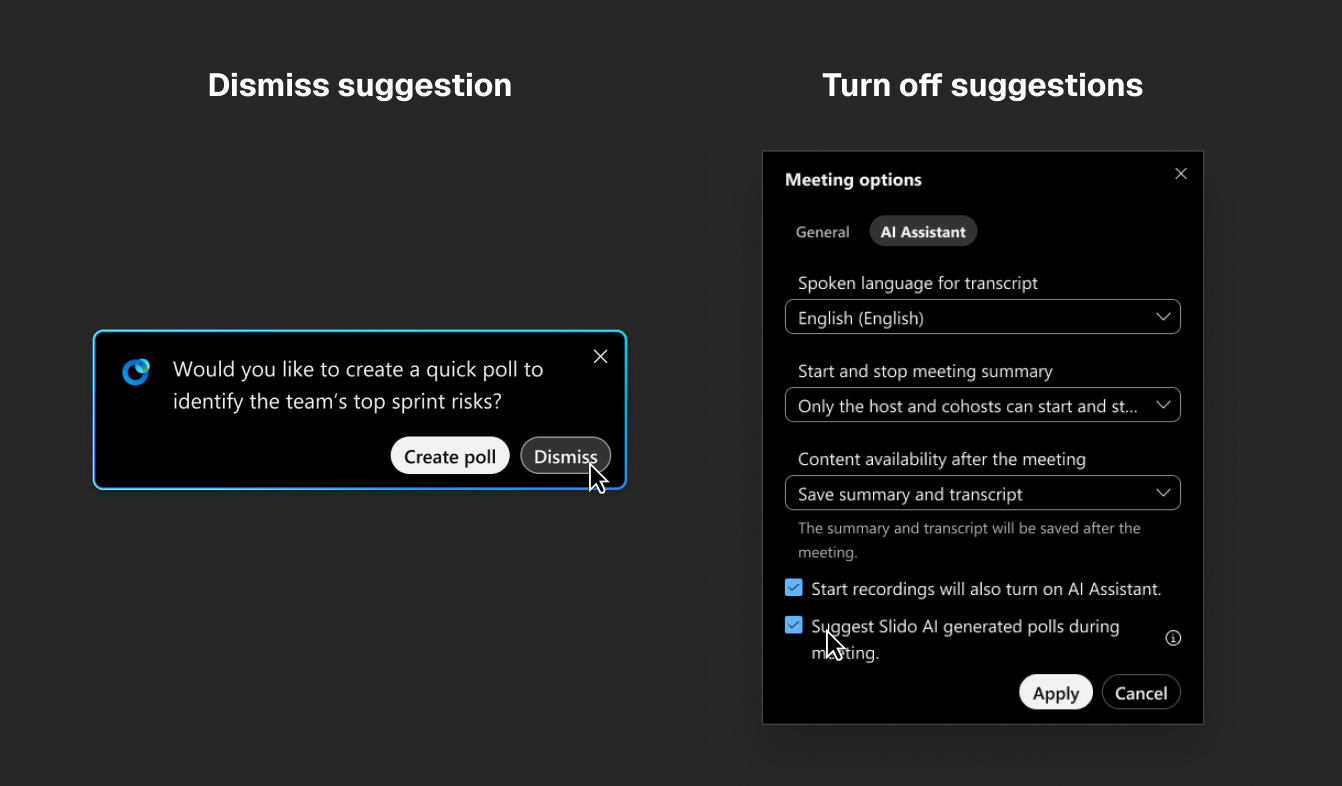

One question shaped every decision: does the host feel helped, or interrupted? The notification pattern, the edit-before-launch default, the suggestion frequency — all of it came back to that.

The suggestion

A Webex notification with a single call to action. Tapping it opens Slido in edit mode with a pre-filled draft — host adjusts and launches, or dismisses. Control stays with them throughout.
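The flow described above can be read as a small state machine. The following is a minimal sketch under stated assumptions, not the POC's implementation: the state and action names are hypothetical, and allowing dismissal directly from the notification is an assumption. The point it illustrates is the edit-before-launch default: there is no path from a suggestion to a launched poll that skips the host's review.

```python
from enum import Enum


class State(Enum):
    SUGGESTED = "suggested"   # notification shown in Webex
    EDITING = "editing"       # Slido open in edit mode with a pre-filled draft
    LAUNCHED = "launched"     # host launched the poll
    DISMISSED = "dismissed"   # host declined the suggestion


# Hypothetical action names. Note there is deliberately no
# (SUGGESTED, "launch") entry: launching always passes through EDITING.
TRANSITIONS = {
    (State.SUGGESTED, "open"): State.EDITING,
    (State.SUGGESTED, "dismiss"): State.DISMISSED,  # assumed to be possible
    (State.EDITING, "launch"): State.LAUNCHED,
    (State.EDITING, "dismiss"): State.DISMISSED,
}


def step(state: State, action: str) -> State:
    """Return the next state, or raise ValueError for a disallowed action."""
    try:
        return TRANSITIONS[(state, action)]
    except KeyError:
        raise ValueError(f"{action!r} is not allowed from {state.name}") from None
```

For example, `step(step(State.SUGGESTED, "open"), "launch")` ends in `State.LAUNCHED`, while `step(State.SUGGESTED, "launch")` raises an error.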

The guardrails

Hard caps prevent fatigue: a maximum of three suggestions per meeting, at least eight minutes between them, a confidence threshold that must be met before a suggestion fires, and backoff after repeated dismissals.
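To make the interplay between these guardrails concrete, here is a minimal sketch of a gate that decides whether a suggestion may be shown. It is illustrative only: the class and method names are hypothetical, the confidence threshold of 0.8 is an assumed value (no number has been published for it), and the backoff rule (the cooldown doubles after each consecutive dismissal) is one possible reading of "backoff", not the team's actual logic. The cap of three and the eight-minute cooldown are the figures stated above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SuggestionGate:
    """Decides whether a poll suggestion may be shown to the host."""

    max_per_meeting: int = 3        # stated cap: three suggestions per meeting
    cooldown_s: float = 8 * 60      # stated minimum gap: eight minutes
    min_confidence: float = 0.8     # ASSUMED value, for illustration only
    backoff_factor: float = 2.0     # ASSUMED: cooldown doubles per dismissal
    shown: int = 0
    consecutive_dismissals: int = 0
    last_shown_at: Optional[float] = None

    def current_cooldown(self) -> float:
        """Base cooldown, lengthened after consecutive dismissals."""
        return self.cooldown_s * self.backoff_factor ** self.consecutive_dismissals

    def allow(self, now_s: float, confidence: float) -> bool:
        """Return True only if every guardrail passes at time now_s."""
        if self.shown >= self.max_per_meeting:
            return False
        if confidence < self.min_confidence:
            return False
        if self.last_shown_at is not None:
            if now_s - self.last_shown_at < self.current_cooldown():
                return False
        return True

    def record_shown(self, now_s: float) -> None:
        """Call when a suggestion is actually shown to the host."""
        self.shown += 1
        self.last_shown_at = now_s

    def record_outcome(self, accepted: bool) -> None:
        """Reset the backoff on acceptance, extend it on dismissal."""
        if accepted:
            self.consecutive_dismissals = 0
        else:
            self.consecutive_dismissals += 1
```

In this sketch, a suggestion shown one minute into the meeting and then dismissed blocks the next one for sixteen minutes rather than eight. That is the kind of behaviour the host experiences directly, which is why the exact rule is worth designing rather than leaving as a default.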

Prototype

See it in action

The prototype walks through the full AI polls flow — transcript detection, notification, Slido sidebar opening in edit mode, host launching the poll.

Validation

What testing told us

The feedback that mattered most wasn't the enthusiasm. It was the hosts who described the exact moment the feature was designed for — the decision that stalled, the question nobody asked, the discussion that ran long because nobody called a vote. They recognised the problem before they'd seen the solution. When the prototype showed the suggestion appearing mid-meeting, the response was recognition, not surprise.

“It might pick up on it quicker than my brain would. I mean, I can imagine even just from being an attendee — if a poll popped up during a conversation and gave me an opportunity to provide some input, I would feel very seen in that scenario. I feel like it would be worth it and have much more positive effects than negative effects.”

“The AI assistant would suggest a quick poll — people at the meeting give updates, everybody gives opinions, we are sometimes lost in all the discussion. This can be a good thing, to stop and reflect.”

Outcomes

From sprint concept to active development

The concept is in POC. What that means in practice: the dev team is tuning the model, and I'm designing alongside them — covering the states and interactions that only become visible once the AI is running on real transcripts. The sprint got us from problem to validated concept in a week. The harder work is making it reliable enough to ship.

19

Sprint participants across EMEA, US, APAC

Senior Leadership, PM, Design, Research

2

Concepts taken to POC

Slido AI polls & Meeting Summary

In development

Model accuracy being refined

Design continues alongside dev team

Success metrics defined for launch

Part of post-sprint work was defining what success looks like — not just for the feature, but for the AI behaviour specifically. These targets will shape the model tuning before launch.

Leading indicators

→ Suggestion acceptance rate ≥ 25%

→ Time-to-launch from suggestion ≤ 15 seconds

→ Dismissal rate ≤ 40%

Lagging indicators

→ +15–20% lift in meetings using Slido in the AI cohort

→ +10–15% lift in responses per poll

→ +0.2–0.4 uplift in post-meeting CSAT

Quality guardrails

→ Irrelevant suggestion rate ≤ 10%

→ Max 3 suggestions per meeting

→ 8–10 min cooldown between suggestions
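As a worked example of how the rate-based targets above could be checked against logged suggestions, here is a minimal sketch. The event shape, the field names, and the `judged_irrelevant` label are hypothetical, and using the median for time-to-launch is an assumption, since the target does not say which statistic applies. The thresholds are the ones listed above.

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List, Optional, Tuple


@dataclass
class SuggestionEvent:
    outcome: str                               # "accepted", "dismissed" or "ignored"
    seconds_to_launch: Optional[float] = None  # only set for accepted suggestions
    judged_irrelevant: bool = False            # hypothetical review label


def indicator_report(
    events: List[SuggestionEvent],
) -> Dict[str, Tuple[Optional[float], bool]]:
    """Map each indicator to (value, target_met) for a list of events."""
    if not events:
        raise ValueError("need at least one suggestion event")
    n = len(events)
    accepted = [e for e in events if e.outcome == "accepted"]
    dismissed = [e for e in events if e.outcome == "dismissed"]
    times = [e.seconds_to_launch for e in accepted if e.seconds_to_launch is not None]

    acceptance = len(accepted) / n
    dismissal = len(dismissed) / n
    irrelevant = sum(e.judged_irrelevant for e in events) / n
    # ASSUMPTION: median is used; the target does not name a statistic.
    launch = median(times) if times else None

    return {
        "acceptance_rate": (acceptance, acceptance >= 0.25),
        "dismissal_rate": (dismissal, dismissal <= 0.40),
        "irrelevant_rate": (irrelevant, irrelevant <= 0.10),
        "time_to_launch_s": (launch, launch is not None and launch <= 15),
    }
```

With ten logged suggestions, of which three were accepted (launched after 8, 12 and 20 seconds), four dismissed and three ignored, the acceptance rate is 30% and the median time-to-launch is 12 seconds, so both leading targets would be met.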

Reflection

What this reinforced

Guardrails are a design problem, not an engineering one

The fatigue guardrails needed as much design thinking as the interaction itself. Suggestion caps, cooldowns, backoff logic — these aren't technical constraints to hand off. They're the difference between a feature that feels trustworthy and one that gets switched off after three meetings.

Vision creates direction; sprints create momentum

The sprint had a clear brief because the vision work had already defined where Slido was going. That sequence mattered. Nineteen people across three continents converged quickly because the problem space was already shaped.

Designing for AI uncertainty is its own discipline

When the AI fires at the wrong moment, or doesn't fire when it should, the design has to handle that gracefully. Optimistic interfaces assume the system works. This one had to account for the full range of model behaviour — which meant designing states and responses that most prototypes never reach.

The next opportunity: post-meeting analytics

Post-meeting engagement tracking is something I wanted to push further, and still do. Knowing which poll moments landed, which were dismissed, and how engagement varied across meeting types would make the AI smarter over time. This is something I'm actively advocating for across the Slido and Webex teams.